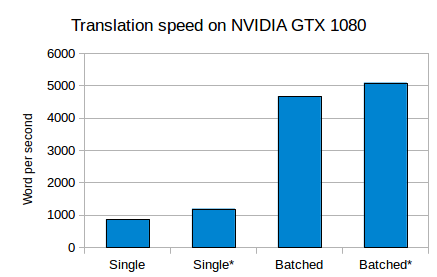

Batched translation speed is very slow compared to Fairseq · Issue #266 · marian-nmt/marian-dev · GitHub

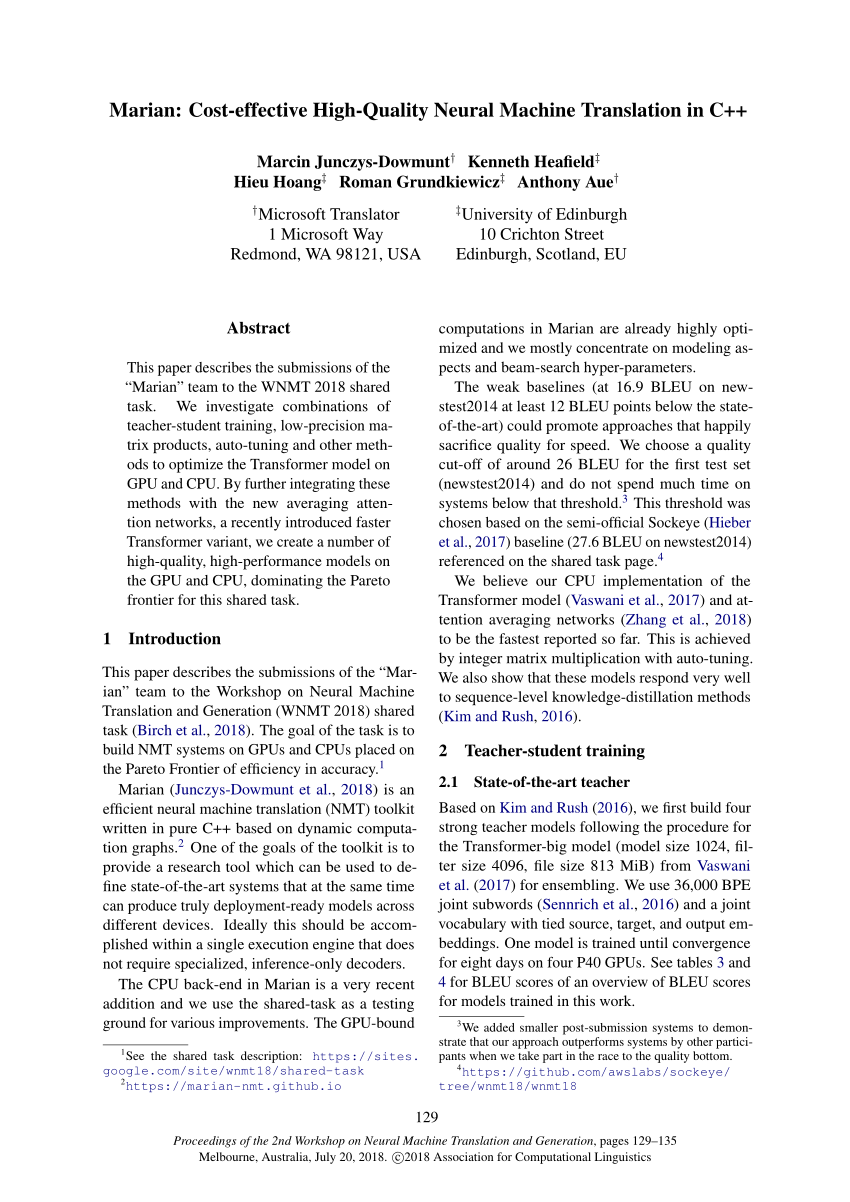

Don't load entire corpus into memory on start up (enhancement request) · Issue #148 · marian-nmt/marian-dev · GitHub

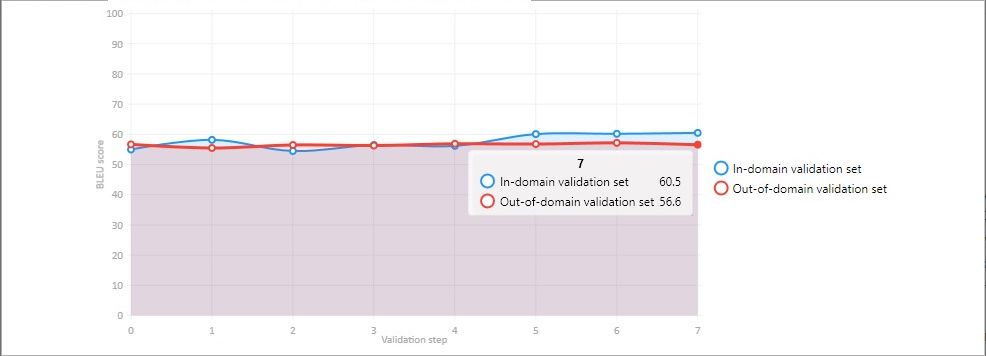

![[Tuning] Results are GPU-number and batch-size dependent](https://user-images.githubusercontent.com/15141326/33256270-a3795912-d351-11e7-83e4-ea941ba95dd5.png)

[Tuning] Results are GPU-number and batch-size dependent · Issue #444 · tensorflow/tensor2tensor · GitHub